India’s AI Transparency Mandate & the Global Fight Against Algorithmic Addiction

- Editor

- March 27, 2026

- Artificial Intelligence, Automobile, Business, Companies & Industry, Global Business, Tech & Innovation

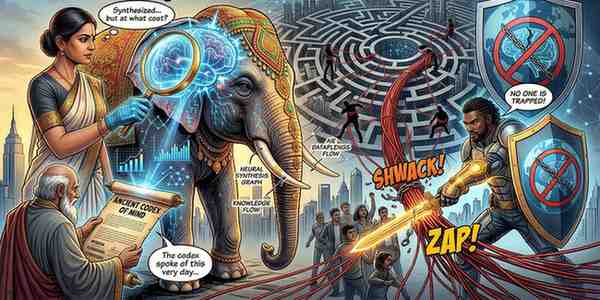

NEW DELHI / LOS ANGELES: The “Wild West” of the digital frontier is being reined in by a pincer movement of aggressive regulation and landmark litigation. India has brought into force some of the world’s most stringent AI disclosure rules, while a historic U.S. jury verdict this week branded social media’s core architecture “negligent by design.”

Segment 1: India’s “Truth in Content” – The 2026 IT Amendment

Effective February 20, 2026, the Ministry of Electronics and Information Technology (MeitY) has brought the IT Amendment Rules, 2026 into force. The rules aim to end anonymous synthetic media, targeting the “viral deception” of deepfakes and AI-altered content.

- The Permanent “Synthetic” Badge: All AI-generated or modified content (images, video, and audio) must now carry a prominent, non-removable label. Crucially, these markers must be embedded in the metadata, ensuring the label survives even if the file is downloaded and re-shared across different platforms.

- The 3-Hour Takedown Ultimatum: In a massive escalation, the window for platforms to remove unlawful content—ranging from non-consensual deepfakes to misinformation—has been slashed from 36 hours to just three hours following a government or court order.

- Traceability & Verification: Platforms with over five million users are now required to obtain a user declaration before publishing AI content and must deploy technical verification tools to catch undeclared synthetic media. Failure to comply risks the loss of “Safe Harbor” protection, making platforms legally liable for user posts.
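To make the three obligations above concrete, here is a minimal sketch in Python of how a platform might model them. The three-hour and 36-hour windows and the five-million-user threshold come from the rules as described above; the `Upload` class, the field names, and both functions are hypothetical illustrations, not anything MeitY or any platform has published.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List

# Figures as reported above; every name in this sketch is hypothetical.
TAKEDOWN_WINDOW = timedelta(hours=3)
LEGACY_TAKEDOWN_WINDOW = timedelta(hours=36)
LARGE_PLATFORM_USERS = 5_000_000


@dataclass
class Upload:
    is_synthetic: bool    # AI-generated or AI-modified content
    user_declared: bool   # uploader declared the content as synthetic
    visible_label: bool   # prominent on-screen label is present
    metadata_label: bool  # label is also embedded in the file metadata


def takedown_deadline(order_received: datetime,
                      window: timedelta = TAKEDOWN_WINDOW) -> datetime:
    """Latest removal time after a government or court order arrives."""
    return order_received + window


def compliance_gaps(upload: Upload, platform_users: int) -> List[str]:
    """List the obligations an upload appears to miss (illustrative only)."""
    gaps: List[str] = []
    if not upload.is_synthetic:
        return gaps
    if not upload.visible_label:
        gaps.append("missing visible label")
    if not upload.metadata_label:
        gaps.append("missing metadata label")
    # Assumption: the declaration duty applies only above the user threshold.
    if platform_users > LARGE_PLATFORM_USERS and not upload.user_declared:
        gaps.append("missing user declaration")
    return gaps


if __name__ == "__main__":
    order = datetime(2026, 3, 1, 10, 0, tzinfo=timezone.utc)
    print("New deadline:", takedown_deadline(order))
    print("Old deadline:", takedown_deadline(order, LEGACY_TAKEDOWN_WINDOW))
    clip = Upload(is_synthetic=True, user_declared=False,
                  visible_label=True, metadata_label=False)
    print("Gaps:", compliance_gaps(clip, platform_users=10_000_000))
```

The sketch treats the clock as starting when the order is received, which is an assumption; how the rules actually define the start of the window is not stated here.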

Segment 2: Landmark Verdict – “Addiction by Design” Liability

While India regulates the content, a Los Angeles jury has just ruled on the vessel. In a bellwether case (KGM v. Meta & YouTube), a jury found Meta and Alphabet’s YouTube liable for the mental health crisis of a 20-year-old woman, marking the first time a court has held platforms accountable for addictive product design.

- The $6 Million Referendum: The jury awarded $3 million in compensatory damages and an additional $3 million in punitive damages, finding the companies acted with “malice, oppression, or fraud.”

- The Culprits – Infinite Scroll & Autoplay: The verdict targeted specific features: infinite scrolling, autoplay, and 24/7 push notifications. The jury agreed these were not neutral “features” but engineering choices designed to exploit the dopamine-driven reward pathways in developing brains—analogous to slot machines.

- The Responsibility Split: Liability was not shared equally. The jury assigned 70% of the fault to Meta (Instagram) and 30% to YouTube, citing internal documents showing Meta knew millions of under-13s were on the platform but prioritized engagement over safety.
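For a rough sense of scale, the figures above can be worked through in a few lines of Python. This is illustrative arithmetic only: it assumes the 70/30 fault split is applied pro rata to the compensatory award alone, which the reporting above does not confirm, and it makes no attempt to apportion the punitive award. The names `split_by_fault` and `FAULT_PERCENT` are hypothetical.

```python
# Award figures and fault percentages as reported above.
COMPENSATORY = 3_000_000
PUNITIVE = 3_000_000
FAULT_PERCENT = {"Meta": 70, "YouTube": 30}


def split_by_fault(amount: int, fault_percent: dict) -> dict:
    """Divide an amount pro rata by fault percentage (an assumption,
    not a statement of how the court actually apportioned damages)."""
    if sum(fault_percent.values()) != 100:
        raise ValueError("fault percentages must sum to 100")
    return {name: amount * pct // 100 for name, pct in fault_percent.items()}


total_award = COMPENSATORY + PUNITIVE
compensatory_shares = split_by_fault(COMPENSATORY, FAULT_PERCENT)

if __name__ == "__main__":
    print(f"Total award: ${total_award:,}")
    for name, share in compensatory_shares.items():
        print(f"{name}: ${share:,} of the compensatory award")
```

Under that assumption, Meta's share of the compensatory award would be $2.1 million and YouTube's $0.9 million.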

Segment 3: The “Doom Scrolling” Dilemma – Personal Control vs. Platform Duty

Together, the two developments have sparked a global debate on the “New Digital Social Contract.”

- Platform Obligation: The California ruling bypasses Section 230 protections by focusing on the code, not the content. It suggests that if the product itself is defective (i.e., engineered to be addictive), the manufacturer is liable for the resulting harm.

- Personal Sovereignty: Despite the legal victories, user agency remains essential. Health experts warn that while labels and slowed-down algorithms help, “digital hygiene” is the only long-term defense against doom scrolling, the compulsive consumption of negative content that algorithms are currently optimized to promote.

- Market Impact: Defense lawyers and tech lobbyists warn of a “tidal wave” of litigation, with over 1,600 similar cases pending from school districts and parents.

Segment 4: Analysis – Transitioning from “Hype” to “Health”

The digital economy is undergoing a fundamental restructuring. Investors are re-evaluating tech valuations as the “growth at all costs” model meets its legal and regulatory ceiling.

| Legacy Model (2020-2025) | The 2026 Pivot |
| --- | --- |
| Engagement at any cost | Safety-by-Design mandates |
| “Unfiltered” AI content | Mandatory labeling & Provenance |
| 36-hour removal windows | Real-time (3-hour) enforcement |
| Infinite loops allowed | Legal liability for “Digital Drugs” |

The Bottom Line: Between India’s technical mandates and the U.S. court’s moral reckoning, the message to Silicon Valley is clear: The product is no longer “just a platform.” It is a managed environment, and the architects are now responsible for the damage caused by the floorplan.